BOSTON — Scientists at Tufts University have unveiled a neuro-symbolic artificial intelligence approach that slashes training energy consumption by a factor of 100, a breakthrough that could reshape the economics of an industry increasingly defined by its staggering power demands.

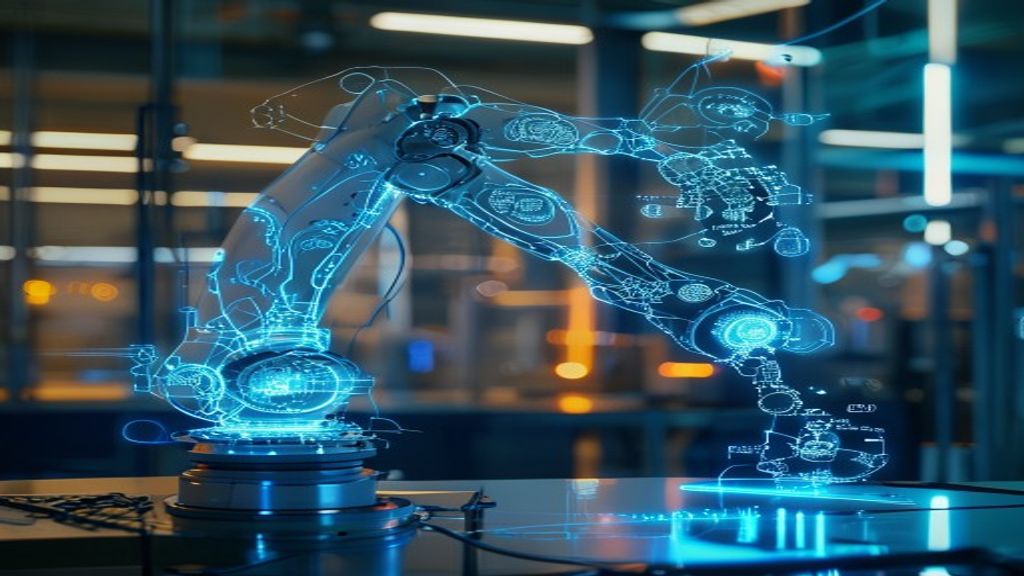

The research, published on April 5, 2026, and led by Professor Matthias Scheutz at the Tufts School of Engineering, combines traditional neural networks with symbolic reasoning — essentially teaching AI systems to follow logical rules rather than relying purely on brute-force trial and error. The results are striking: robots trained using the new method achieved a 95 percent success rate in just 34 minutes, compared to a 34 percent success rate over more than 36 hours using conventional deep reinforcement learning. The findings arrive at a moment when the AI industry’s appetite for electricity has become a first-order policy concern across governments and boardrooms worldwide.

| Parameter | Details |
|---|---|
| Lead Researcher | Professor Matthias Scheutz, Tufts University School of Engineering |
| Method | Neuro-symbolic AI combining neural networks with symbolic reasoning |
| Training Time Reduction | 34 minutes vs. 36+ hours (conventional methods) |
| Success Rate | 95% (neuro-symbolic) vs. 34% (standard approach) |
| Energy Reduction Factor | Approximately 100x less energy consumed |
| AI Share of US Electricity | More than 10% and rising |
| Projected Global Data Center Investment | $7 trillion |

Situational Breakdown

The core innovation lies in how the neuro-symbolic system constrains the learning process. Traditional deep reinforcement learning trains AI models by allowing them to attempt millions of random actions, gradually reinforcing those that produce desired outcomes. It is effective but extraordinarily wasteful — the computational equivalent of having a student guess answers to every possible question rather than studying the textbook first. Scheutz’s approach introduces symbolic rules that dramatically narrow the search space, telling the neural network which actions are logically impossible or counterproductive before training even begins. — ScienceDaily

The practical demonstration involved robotic manipulation tasks, where the neuro-symbolic system not only learned faster but performed substantially better. A 95 percent success rate against 34 percent is not a marginal improvement; it represents a fundamentally different quality of outcome. The 34-minute training window, compared to a day and a half of conventional compute, translates directly into reduced electricity consumption, lower cooling requirements, and fewer GPU hours — the three factors that dominate AI infrastructure costs. — Tufts University
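The ratios implied by those figures can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the numbers reported above; the variable names are illustrative, and "more than 36 hours" is treated as a lower bound.

```python
# Figures as reported in the article; nothing here is measured independently.
baseline_hours = 36.0            # "more than 36 hours", so a lower bound
neuro_symbolic_minutes = 34.0
baseline_success = 0.34
neuro_symbolic_success = 0.95

# Convert the baseline to minutes so both durations share a unit.
baseline_minutes = baseline_hours * 60

time_ratio = baseline_minutes / neuro_symbolic_minutes
success_ratio = neuro_symbolic_success / baseline_success

print(f"wall-clock speedup: at least {time_ratio:.1f}x")
print(f"success-rate ratio: {success_ratio:.2f}x")
```

The wall-clock ratio comes out at roughly 64x, which is below the reported 100x energy reduction. Elapsed time alone therefore does not account for the full energy figure, and the article does not say how the energy was measured.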

The timing of the publication is significant. AI systems now consume more than 10 percent of total US electricity generation, a figure that has roughly doubled in the past 18 months as large language models, image generators, and autonomous systems proliferate across industries. Data center operators are scrambling to secure power purchase agreements, and in several US states, utilities have begun delaying residential renewable energy projects to prioritize industrial AI loads. — TechStartups

The Energy Crisis Behind the Breakthrough

To understand why this research matters, one must first grasp the scale of the problem it addresses. The global data center buildout required to sustain current AI growth trajectories is projected to cost $7 trillion in infrastructure investment over the coming decade. That figure encompasses not just server hardware but the power plants, transmission lines, water cooling systems, and land acquisitions necessary to keep the machines running.

“AI data centers are on track to require $7 trillion in infrastructure investment, making energy efficiency a critical bottleneck.” — TechStartups

The environmental implications are equally stark. While major technology companies have pledged carbon neutrality, the explosive growth in AI compute has pushed several, including Microsoft and Google, to report rising emissions that put those pledges further out of reach. Nuclear energy, once considered too controversial for the tech sector, is now being actively pursued by multiple hyperscalers as the only carbon-free source capable of delivering gigawatts of baseload power. Any technology that reduces AI energy consumption by two orders of magnitude does not merely improve efficiency; it potentially reshapes the entire infrastructure equation.

How Neuro-Symbolic AI Actually Works

The neuro-symbolic approach is not entirely new as a concept, but its application to training efficiency at this scale represents a genuine advance. The method works by layering symbolic logic — the kind of structured, rule-based reasoning that dominated early AI research in the 1970s and 1980s — on top of modern neural network architectures. The symbolic layer acts as a filter, eliminating vast swathes of the action space that pure neural approaches would otherwise explore blindly.

“The neuro-symbolic approach applies rules that limit trial and error during learning, drastically reducing computational overhead.” — ScienceDaily

Consider the robotic training scenario: a conventional system might attempt thousands of physically impossible movements — reaching through solid objects, applying negative force, rotating joints beyond mechanical limits — before learning to avoid them. The symbolic layer encodes these constraints from the outset, allowing the neural network to focus its limited compute budget on actions that could actually succeed. The result is not just faster training but better outcomes, because the model spends its capacity learning useful behaviours rather than discovering obvious impossibilities.
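The filtering idea can be illustrated with a toy action mask. This is a sketch under stated assumptions, not the Tufts implementation: the `Action` fields, the 170-degree joint limit, and the three rules are hypothetical stand-ins for the kinds of constraints described above.

```python
import random
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Action:
    """Hypothetical robot action; the fields are illustrative only."""
    joint_angle_deg: float
    force_newtons: float
    passes_through_solid: bool


# Assumed mechanical limit, chosen only for this example.
JOINT_LIMIT_DEG = 170.0

# Symbolic rules: each returns True when the action is allowed.
RULES: list[Callable[[Action], bool]] = [
    lambda a: abs(a.joint_angle_deg) <= JOINT_LIMIT_DEG,  # within joint limits
    lambda a: a.force_newtons >= 0.0,                     # no negative force
    lambda a: not a.passes_through_solid,                 # no reaching through objects
]


def is_admissible(action: Action) -> bool:
    """An action is admissible only if every rule accepts it."""
    return all(rule(action) for rule in RULES)


def admissible_actions(candidates: list[Action]) -> list[Action]:
    """Drop rule-violating actions before the learner ever sees them."""
    return [a for a in candidates if is_admissible(a)]


def sample_action(candidates: list[Action], rng: random.Random) -> Action:
    """Explore uniformly, but only among admissible actions."""
    allowed = admissible_actions(candidates)
    if not allowed:
        raise ValueError("no admissible action among the candidates")
    return rng.choice(allowed)


CANDIDATES = [
    Action(90.0, 5.0, False),    # satisfies every rule
    Action(200.0, 5.0, False),   # beyond the joint limit
    Action(45.0, -1.0, False),   # negative force
    Action(30.0, 2.0, True),     # passes through a solid object
]

print(len(admissible_actions(CANDIDATES)), "of", len(CANDIDATES), "candidates remain")
```

In a real training loop, `sample_action` would stand in for the exploration step, so that no trials are spent on movements the rules already exclude.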

Industry Implications and Sceptical Voices

The research has generated significant interest among AI infrastructure planners, but experts caution against extrapolating robotics results directly to the large language model training pipelines that consume the bulk of global AI compute. Robotic manipulation tasks have well-defined physical constraints that lend themselves naturally to symbolic encoding. Language, vision, and multimodal reasoning operate in far more ambiguous domains, where crafting symbolic rules is far harder.

Nevertheless, the principle — that hybrid approaches combining structured reasoning with learned representations can achieve superior efficiency — has broad applicability. Several research groups at MIT, Stanford, and DeepMind have published related work exploring neuro-symbolic methods for scientific simulation, drug discovery, and autonomous driving. If the Tufts results catalyse a broader shift toward hybrid training paradigms, even partial efficiency gains across the industry could save billions of dollars and terawatt-hours of electricity annually.

The Geopolitical Dimension

Energy-efficient AI is not merely a technical or economic question — it is rapidly becoming a geopolitical one. Nations with constrained power grids stand to benefit disproportionately from breakthroughs that reduce AI’s energy footprint. The ongoing tensions in the Strait of Hormuz, through which roughly 20 percent of global oil shipments transit, have already disrupted energy markets across South and Central Asia. As mediators propose a 45-day US-Iran ceasefire to stabilise the region, the vulnerability of energy-dependent AI strategies has become painfully apparent.

Countries that can train competitive AI systems on a fraction of the power will hold a structural advantage in the emerging technology landscape. Conversely, nations locked into energy-intensive training paradigms will find themselves increasingly dependent on stable fossil fuel supply chains — a dependency that carries both economic and strategic risks in an era of accelerating climate policy and regional conflict.

🇵🇰 Pakistan Connection

Pakistan’s relevance to this breakthrough is direct and urgent. The country is currently rationing electricity amid the Hormuz Strait crisis, with mandatory early market closures imposed across major cities to manage grid strain. In this environment, energy-efficient AI is not an abstract research interest — it is a prerequisite for Pakistan’s stated ambition of training one million AI professionals and growing its digital economy to seven percent of GDP by 2030.

If neuro-symbolic methods prove scalable beyond robotics, Pakistan could potentially build competitive AI training infrastructure without the massive power investments that have made the field prohibitively expensive for developing economies. A 100-fold reduction in training energy would transform the calculus entirely, allowing Pakistani universities and startups to train models on existing grid capacity rather than waiting for power generation that may take years to materialise. The intersection of Pakistan’s AI ambitions and its energy constraints makes it one of the countries with the most to gain from this line of research.

BolotosAI Assessment

The Tufts neuro-symbolic research represents a genuine inflection point, but its ultimate impact depends on scalability. Three outcomes deserve close attention in the months ahead.

First, watch for replication studies. A single result from one laboratory, however impressive, must be validated across different task domains and at different scales before the industry will shift training paradigms. If independent groups confirm similar efficiency gains in language or vision tasks by mid-2026, expect rapid commercial adoption.

Second, monitor the response from hyperscalers. Companies like Microsoft, Google, and Amazon have committed tens of billions to data center construction. If neuro-symbolic approaches reduce compute requirements by even a fraction of what the Tufts team demonstrated, some of those capital plans may be revised downward — a development that would ripple through energy markets, real estate, and semiconductor supply chains.

Third, observe how developing nations respond. Countries with constrained power grids, such as Pakistan, Bangladesh, and several African and Southeast Asian economies, have the strongest incentive to adopt energy-efficient training methods. If neuro-symbolic AI democratises access to competitive model training, the geography of AI leadership could shift significantly away from its current concentration in North America and China. The bottleneck has always been energy. This research suggests that bottleneck may be less restrictive than anyone assumed.